This One Weird Trick Fixes Impostor Syndrome and Ruins Everything Else

Ah, Impostor Syndrome, the bad guy from the awesome Disney movie The Incredibles. Either that or it’s the fear that you’re not good enough to be where you are, that your success to this point is a fluke, and that you’ll soon be found out. I’d rather talk about the first one because I’m a bit of a movie nerd and it would be a lot more lighthearted and fun, but then my title wouldn’t make any sense.

So I’ve got a surefire, yet for most people pretty unrealistic, way to defeat that supervillain: having actual superpowers that make you practically invulnerable.

OK, fine, enough about the movie, but it is an incredible movie.

So I’ve got a surefire, yet for most people pretty unrealistic, way to defeat this superego villain: faking confidence and never admitting you aren’t sure of yourself.

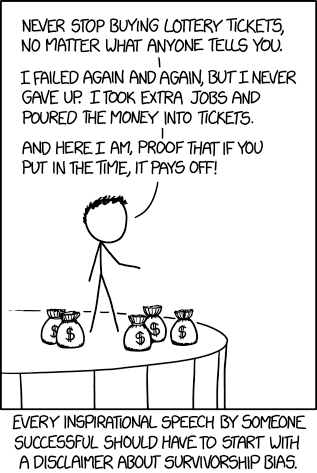

Look, I’m not saying this is a particularly good or healthy way to deal with Impostor Syndrome, but it’s probably the most common, and often the only outwardly apparent, one I’ve seen. Fake it ‘til you make it. Believe in yourself. Do or do not, there is no try. And other things you hear from people with survivorship bias.

I’m not saying that faking confidence and those catchy slogans are all bad advice, but if faking confidence is basically all you have to cope with what can be pretty overwhelming feelings, to me it seems like a sign of an unhealthy culture. Frequently when I’ve seen people pursue other outlets, like actually admitting to being afraid or expressing doubt, they basically get shit on in some way. Most people who care about advancing quickly learn to fake confidence because that’s what they see people doing at higher levels. However, not everyone aspires to those higher levels or can convincingly pull off conning others or even themselves. Often people get burned a few times before they learn how to do it.

For example, early in one of my first jobs, surrounded by a lot of apparently very smart people, I was listening to a conversation being held in a group setting. It was very high level and involved a lot of terms I didn’t fully understand, so I asked what I considered to be a clarifying question. However, the response basically amounted to a judgemental insinuation that I shouldn’t interrupt while the adults were talking about important things. What I took from that was not to learn by asking questions, but to communicate the way I saw others around me communicating, which mostly amounted to bullshitting to sound smart and seeing if anyone sounded smarter with their bullshit. It’s not exactly the kind of communication style you’d expect to breed productivity and trust.

Another example I’ve seen was a coworker who was very smart and competent, especially for the relatively short amount of time they’d been working in the field, but they were afraid to deploy changes to a vitally important and sensitive production system. I consider fear when touching such systems to be a totally reasonable and normal thing even after years of doing it. I think they trusted me enough to admit this, but I know from conversations that had recently happened around their performance review that it was something they wouldn’t admit widely for fear that it would be used to justify not advancing them. So they basically had to pretend they weren’t worried about making these changes even though from my perspective someone not being worried would be worrisome.

One final example I’ve seen was a manager whose competence seemed to be questioned when they tried to sound the alarm early on an important project that was obviously not going to hit an existing, totally arbitrary, poorly planned deadline. As a result, it seemed easier just to agree to the deadline while using a bunch of weasel words. Missing deadlines (sometimes euphemistically referred to as estimates even when very little estimating was done to arrive at the date) was a common occurrence in the department, but the standard operating procedure seemed to be to agree to unrealistic timelines while faking confidence, and then to justify it after the fact when those timelines weren’t met. While higher-ups were mostly forgiving of missed deadlines, constantly missing them was demotivating, and attempting to improve the estimation process by being reasonable up front led to unfavorable reactions from those same higher-ups.

So faking confidence in those situations led to an unwillingness to ask questions, an unwillingness to ask for help when making dangerous changes, and an unwillingness to make reasonable estimates. At least people didn’t have to admit to their fear or impostor syndrome though (sarcasm emoji here if one existed).

Obviously there are tons of articles on better ways of dealing with fear and impostor syndrome, but in my ideal world faking confidence wouldn’t be such a prevalent one. So more than helping people who struggle with these issues, I hope what I’ve written can help those in positions of power see how creating an environment where false confidence is necessary leads to negative outcomes. Often those in positions of power either went through faking it to get where they are, so they assume it’s something like a rite of passage for others, or they’re incredibly privileged and possibly delusional, so they were totally confident from the beginning and can’t identify with people who feel like impostors. However, forcing others to fake confidence leads to poor communication, risky behavior, and unrealistic expectations.

Admitting to experiencing impostor syndrome is helpful to others so they don’t feel alone, but actually doing so is something usually done from a privileged position. I definitely experience impostor syndrome, but much less strongly than others I’ve known, and as an educated, white male with more than a decade in my profession I don’t worry that admitting to it will hurt me much. Less privileged people such as minorities or people early in their career rightly won’t feel as comfortable talking about it unless they’re made to feel so by the people around them.

When I’ve been in managerial positions I’ve definitely tried to keep this in mind and build relationships of trust with those I’ve managed so that talking about these kinds of things would be possible. Building that trust can take some time. I’m sure I wasn’t always as effective at it as I could have been, especially when I first started managing and felt like an impostor in that role. I hope that along the way I made it easier for someone to cope with their impostor syndrome without just pretending it didn’t exist, and that by writing this others might consider how they can do the same.

So if you’re noticing people on your team oozing ridiculous amounts of confidence, to the point where you know they’re full of it, realize they’re coping and let them know about what were likely years where you were constantly screwing up and learning your way as well. Make it OK for them to tell you when they’re scared or nervous about their work and they’ll repay you with honest communication, insightful questions, responsible actions, and reasonable estimates. Alright, that last thing might not exist, but at least they’ll try.

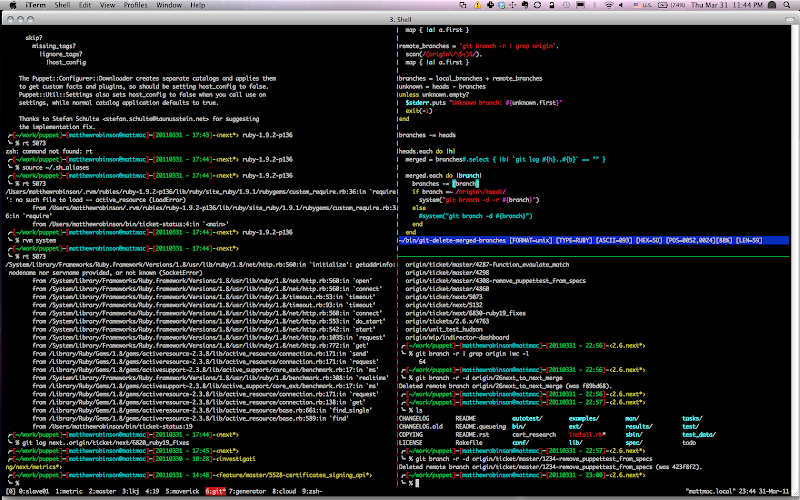

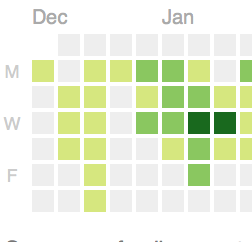
